This week we will hit a milestone we thought we might never reach – selling ColorHug device number 2000. We started making ColorHugs just 18 months ago and have come a long way from the first batch, which was hand-built on a desk in our back bedroom. I started the project as a hobby, a way to make some embedded hardware again, as it was something I enjoyed doing at university and hadn’t done for a while. I assured my wife we wouldn’t need to make more than 50. So imagine how we felt when we got over 800 responses to a single blog post. I only wrote it to check it was worth making that initial batch! The hobby turned into a second job almost overnight, one that came with all the fun of dealing with customer emails, legal issues and setting up a business that pays tax. Ania was not best pleased when our lovely guest room turned into our manufacturing department and my “hobby” had her screwing devices together on weekends, evenings and even on Boxing Day.

A lot has changed since that first batch. We have outsourced the PCB fabrication, and we have occasionally recruited my mum, my dad and, of course, Ania to help with the manufacture, assembly, dispatch and administration of ColorHug. We finally have a new outside office, so after nearly two years we have our house back! Most importantly, we have had a little girl, who keeps us very busy and has therefore slowed the development of the ColorHug Spectro.

Building an OpenHardware device has definitely been a worthwhile pursuit and something I believe in. By the end of this week we will have built and shipped 2000 devices around the world, including two rounds of free gifts to upgrade early adopters with the latest accessories and design improvements. We’ve also built a large community of people who use ColorHugs to calibrate external screens and panels in domestic and commercial settings.

We still don’t make much profit on each unit and definitely wouldn’t recommend making calibration hardware as a get rich quick scheme, but we have enjoyed growing ColorHug and fostering the community that has built up around it. We’d like to take this opportunity to thank everyone who has helped make ColorHug a success – from those who’ve helped make the device through to those who contribute in the community by testing and reporting bugs.

Some interesting statistics from the last 18 months:

- Total sold: 1998

- Number of batches: 8

- Typical number of jiffy bags ready to go at any time: 60

- Number of returns: 8

- Number of automated emails from PayPal: 2520

- Number of emails Ania and I have sent (most semi-automated): 5087

- Number of LiveCD updates: 9

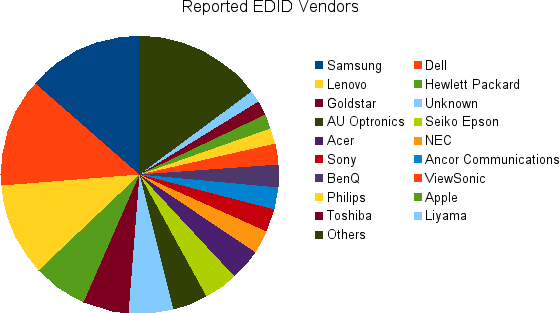

- Number of different countries sent to: 21

- Amount of money spent on postage: £9,354

So, basically, we’re very humbled and grateful!

Richard, Ania and baby Hughes.