Artists, gamers, rejoice! GNOME Shell 42 will let applications handle input events at the full input device rate.

It’s a long story

Traditionally, GNOME Shell has compressed pointer motion events so that their handling is synchronized to the monitor refresh rate. This means applications would typically see approximately 60 events per second (or 144 if you follow the trends).
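To make the idea of motion compression concrete, here is a minimal sketch of it. This is not Mutter's code: the `MotionEvent` type and the `compress_motion` helper are made up for illustration, and the sketch simply assumes that only the last motion event in each frame interval survives.

```python
from dataclasses import dataclass


@dataclass
class MotionEvent:
    time_ms: float
    x: float
    y: float


def compress_motion(events, frame_interval_ms):
    """Keep only the last motion event that falls within each frame interval."""
    latest_per_frame = {}
    for ev in events:
        frame_index = int(ev.time_ms // frame_interval_ms)
        latest_per_frame[frame_index] = ev  # later events overwrite earlier ones
    return [latest_per_frame[i] for i in sorted(latest_per_frame)]


# A hypothetical 1000Hz device moving for 100 ms, shown on a 60Hz display.
events = [MotionEvent(time_ms=float(t), x=float(t), y=0.0) for t in range(100)]
compressed = compress_motion(events, frame_interval_ms=1000 / 60)
```

In this toy run, 100 device events collapse into one event per frame that was touched, which is roughly what applications used to see.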

This trait, inherited from the early days of Clutter, was not just a shortcut. Handling motion events implies looking up the actor beneath the pointer (mainly so we know which actor to send the event to), and that was an expensive enough operation that it made sense to do it as infrequently as possible. If you are a regular reader of this blog, you might remember how this area got great improvements in the past.

But that alone is not enough. Motion events can also end up handled in JS land, and it is in the best interest of GNOME Shell (and of people complaining about frame loss) that we don’t jump into the JavaScript machinery too often in the course of a frame. This, again, is something that makes sense to keep to a minimum.

Who wants it different?

Applications typically don’t care a lot about motion events beyond keeping up with the frame rate. Others, however, rely on motion event data so heavily that this event compression is suboptimal.

Some examples where sending input events at the device rate matters:

- Applications that use device input for velocity/direction/acceleration calculations (e.g. a drawing app applying a brush effect) want as much granularity as possible. Compressing events smooths the values and tampers with those calculations.

- Applications that render more often than the frame rate (e.g. games with vsync off) may spend multiple frames without seeing a motion event. Many of those are also timing sensitive: they want not just as much granularity as possible, but also events delivered as fast as possible.
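The smoothing effect on velocity calculations can be shown with a toy example. Everything here is hypothetical: a one-dimensional stroke sampled every millisecond, with a short fast flick in the middle, compared against the same stroke where the application only sees every 16th sample.

```python
def speeds(samples):
    """Speed (units/ms) between consecutive (time_ms, position) samples."""
    return [
        abs(p1 - p0) / (t1 - t0)
        for (t0, p0), (t1, p1) in zip(samples, samples[1:])
    ]


# Hypothetical stroke: slow movement (1 unit/ms) with a 4 ms flick at 5 units/ms.
samples = []
position = 0.0
for t in range(49):
    samples.append((float(t), position))
    position += 5.0 if 20 <= t < 24 else 1.0

full_rate_peak = max(speeds(samples))         # every device event is seen
compressed_peak = max(speeds(samples[::16]))  # only every 16th event is seen
```

With all samples, the flick shows up at its real speed. With one sample in sixteen, it is averaged with the slow movement around it, so a brush that reacts to speed would barely notice it.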

How crazy is crazy?

As mentioned, events are now sent at the input device rate, but… what rate is that? It ranges from tens of events per second on cheap devices, to a hundred or so on your regular laptop touchpad, to the low hundreds on drawing tablets.

But enter the gamer: high-end gaming mice have an input frequency of 1000Hz, which means there are approximately 16 events per frame (in the typical case of a 60Hz display) that must get through to the application ASAP. This use case is significantly more demanding than the others, and not by a small margin.
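The events-per-frame figure is plain division. A tiny sketch, with a hypothetical helper name, for anyone wanting to plug in their own device and display rates:

```python
def events_per_frame(device_hz, display_hz):
    """Average number of input events arriving during one display frame."""
    return device_hz / display_hz


mouse_on_60hz = events_per_frame(1000, 60)    # about 16.7
mouse_on_144hz = events_per_frame(1000, 144)  # about 6.9
```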

A look under the hood

Having to look up the actor beneath the pointer 1000 times a second (16x as often) means it doesn’t suffice to avoid GPU-based picking in favor of SIMD operations; there has to be a very aggressive form of caching as well.

To keep the required calculations to a minimum, Mutter now caches a set of rectangles that approximates the visible, uncovered area of the actor beneath the pointer. These are in the same coordinate space as input events, so comparisons are direct. If the pointer moves outside the cached region, or the cache is dropped by other means (e.g. a relayout), the actor is looked up again and the new area is cached.
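The caching scheme described above could be sketched roughly like this. It is an illustration only, not Mutter's implementation: `Rect`, `PickCache`, and `toy_full_pick` are invented names, and the "expensive" lookup is a stand-in function over a toy scene with two side-by-side actors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: float
    y: float
    width: float
    height: float

    def contains(self, px, py):
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


class PickCache:
    def __init__(self, full_pick):
        self._full_pick = full_pick  # expensive lookup: (x, y) -> (actor, rects)
        self._actor = None
        self._rects = []
        self.full_picks = 0  # counts how often the expensive path runs

    def invalidate(self):
        """Drop the cache, e.g. after a relayout."""
        self._actor = None
        self._rects = []

    def pick(self, x, y):
        # Fast path: the pointer is still inside the cached region.
        if self._actor is not None and any(r.contains(x, y) for r in self._rects):
            return self._actor
        # Slow path: look the actor up again and cache its visible area.
        self.full_picks += 1
        self._actor, self._rects = self._full_pick(x, y)
        return self._actor


def toy_full_pick(x, y):
    """Toy scene: two 100x100 actors side by side."""
    if x < 100:
        return "left", [Rect(0, 0, 100, 100)]
    return "right", [Rect(100, 0, 100, 100)]


cache = PickCache(toy_full_pick)
picked = [cache.pick(float(x), 50.0) for x in range(200)]
```

Sweeping the pointer across both actors produces 200 motion events but only two full lookups in this sketch, one per actor entered.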

This is of course most effective when the actors are big, with pointer picking virtually dropping to zero on e.g. fullscreen clients, but it helps even when blazing your pointer across many actors on the screen. Crossing a button from left to right can take a surprising number of events.

But what about JavaScript? Would it maybe trigger a thousand times a second? Absolutely not. Events as handled within Clutter (and GNOME Shell actors) are still motion compressed. This unthrottled event delivery only applies in the direction of Wayland clients.

Other areas got indirectly stressed by the many additional events, so there have been a number of optimizations across the board to keep things from turning bad even when Mutter is doing so much more.

How does it feel?

This is something you’d have to check for yourself. But we can show you how this looks!

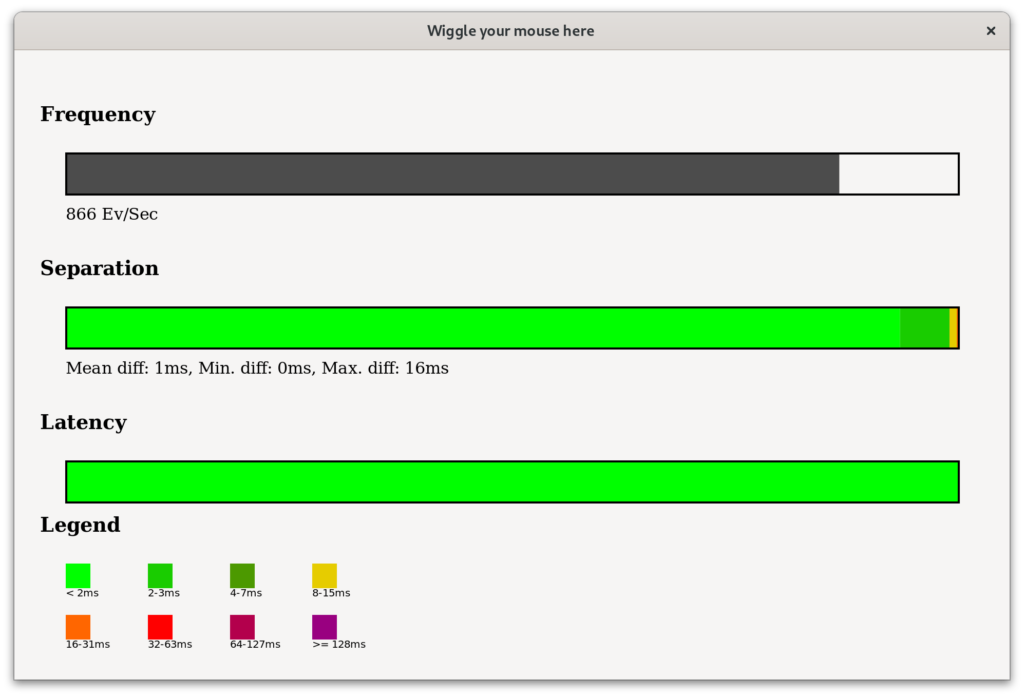

This is a quick and dirty test application that displays timing information about the received events. Some takeaways from this:

- Due to human limitations, it is next to impossible to produce a steady 1000Hz input rate for a full second. Moving the mouse left and right wastes precious milliseconds decelerating to accelerate again, and even drawing the most perfect circle is too slow for the device to need one event per millisecond. The devices are capable of shorter 1000Hz bursts, though.

- The separation between events (i.e. the time difference between the current and last events as received by the client) is predominantly sub-frame. There is only some larger separation when Mutter is busy putting images onscreen.

- The event latency (time elapsed between emission by the hw/kernel and reception by the application) is <2ms in most cases. There are surely a few events that take longer to reach the application, but that is essentially noise.
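For reference, the two metrics above are simple differences between timestamps. The sketch below is not the test application's code: the `event_stats` helper is hypothetical, it assumes both timestamps come from the same clock, and the records are made-up illustrative values rather than measurements.

```python
def event_stats(records):
    """records: list of (emitted_ms, received_ms) pairs, in reception order.

    Returns (latencies, separations), both in milliseconds.
    """
    latencies = [received - emitted for emitted, received in records]
    separations = [b[1] - a[1] for a, b in zip(records, records[1:])]
    return latencies, separations


# Made-up values for illustration only.
records = [(0.0, 0.5), (1.0, 1.5), (2.0, 3.0), (3.0, 3.5)]
latencies, separations = event_stats(records)
```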

Gamers (and other people that care about responsiveness) should notice this as “less janky”.

Didn’t drawing tablets have this before?

Yes and no. Mutter skipped motion compression altogether for drawing tablets, since the applications interested in these really preferred the extra events despite the drawbacks. With these changes in place, drawing tablet users will purely benefit from the improved performance.

Why so loooong

If you have been following GNOME Shell development, you might have heard about this change before. Why did it take so long to have this merged?

The showstopper was probably what you would suspect the least: applications that are not handling events. If an application is not reading events in time (is temporarily blocking the main loop, frozen, slow, in a breakpoint, …), these events will queue up.

But this queue is not infinite; the client would eventually be shut down by the compositor. With these input devices that could take a long… less than half a second. Clearly, there had to be a solution in place before we rolled this in.

There’s been some back and forth here, and several proposed solutions. The applied fix is robust, but unfortunately still temporary. A better solution is being proposed at the Wayland library level, but it’s unlikely to be ready before GNOME 42. In the meantime, users can happily shake their input devices without thinking about how many times a second is enough.

Until the next adventure!