Interesting discussion on pgo about embeddable scripting engines. Another option to experiment with is Syx Smalltalk, created by fellow Smalltalker Luca Bruno. It has full support for embedding and is quite portable as well.

Smalltalk can do closures as well. In Smalltalk, closures are known as blocks and are used for just about everything, from enumerating collections to exception handling. Even control flow (if, while, etc.) is implemented using blocks. For example, loops are implemented by sending the #whileTrue message (amongst others) to a block. The block is then evaluated repeatedly until it returns false. The beauty of this approach is that one can build custom, user-defined control structures.

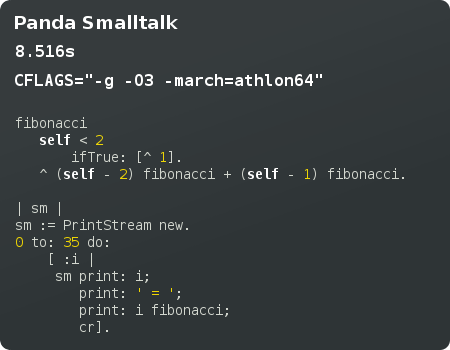
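For readers without a Smalltalk image at hand, the same idea can be sketched in Python, with lambdas standing in for blocks. The names `while_true`, `repeat_until` and `count_up_to` are made up for this illustration and are not part of any Smalltalk or Python library.

```python
def while_true(condition, body):
    """Evaluate body() for as long as condition() returns a true value."""
    while condition():
        body()


def repeat_until(body, condition):
    """A user-defined loop: run body() once, then keep going until condition() holds."""
    body()
    while_true(lambda: not condition(), body)


def count_up_to(limit):
    """Collect 1..limit using only the block-style loop above."""
    seen = []
    while_true(lambda: len(seen) < limit, lambda: seen.append(len(seen) + 1))
    return seen
```

`repeat_until` is the interesting part: it is a new control structure written in ordinary user code, built from nothing more than closures and the one primitive loop.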

The only niggle is that classic Smalltalk-80 blocks are not fully re-entrant, because they piggy-back on their enclosing method activation. I intend to fix this in Panda Smalltalk, and I believe it has already been fixed in other VMs.

Meta-Object Protocols

I’ve thought a lot about Smalltalk’s meta-system recently, particularly how its meta-level is explicitly separated from the base level. This is realized in the form of class objects, which describe all objects in the system. Even classes themselves are instances of classes (their metaclasses). Taken naively, this leads to an elaborate metaclass hierarchy of infinite regress. Smalltalk-80 closes the regress with a circularity: every metaclass is an instance of Metaclass, and Metaclass is in turn an instance of its own metaclass, Metaclass class. The Metaclass class is an instance of an instance of itself!
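To make the instance-of chain concrete, here is a toy model in Python, assuming the usual Smalltalk-80 arrangement in which every metaclass is an instance of Metaclass. `Node` and `class_chain` are hypothetical helpers for this sketch only; nothing here uses Python’s own metaclasses.

```python
class Node:
    """A bare object that only knows its name and which object is its class."""

    def __init__(self, name):
        self.name = name
        self.klass = None


def class_chain(obj, steps):
    """Follow the 'is an instance of' link a fixed number of times."""
    names = []
    for _ in range(steps):
        obj = obj.klass
        names.append(obj.name)
    return names


point = Node("a Point")
point_class = Node("Point")
point_metaclass = Node("Point class")
metaclass = Node("Metaclass")
metaclass_class = Node("Metaclass class")

point.klass = point_class
point_class.klass = point_metaclass
point_metaclass.klass = metaclass
metaclass.klass = metaclass_class
metaclass_class.klass = metaclass  # the loop that stops the regress
```

Following `klass` from `point` visits Point, Point class and Metaclass, and then bounces between Metaclass class and Metaclass forever.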

On the other hand, Self, Io and JavaScript did away with the notion of classes. In these prototype-based languages, the only way to know an object is to send messages to it and see how it responds. One creates a new object by cloning another. Messages to a clone can optionally be delegated to its parent (the object from which it was cloned).

This is a monistic approach compared to Smalltalk’s dualistic form. Naturally, it makes the object system a bit more flexible and lightweight. There is no need to create a class just to represent a singleton instance. By manipulating an object’s parent slots (the protos list in Io), one can even have multiple inheritance (as supported in Self and Io).
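A rough Python sketch of cloning and delegation may help here. It is only loosely modelled on Io: the `Proto` class and its `clone`, `lookup` and `send` methods are assumptions made for this example, not Io’s or Self’s actual API.

```python
class Proto:
    """A classless object: a bag of slots plus a list of parents to delegate to."""

    def __init__(self, *protos, **slots):
        self.protos = list(protos)
        self.slots = dict(slots)

    def clone(self, **slots):
        """Create a new object whose only parent is the receiver."""
        return Proto(self, **slots)

    def lookup(self, name, _seen=None):
        """Depth-first search of own slots, then of each parent in order."""
        seen = set() if _seen is None else _seen
        if id(self) in seen:  # guard against cycles in the parent graph
            raise KeyError(name)
        seen.add(id(self))
        if name in self.slots:
            return self.slots[name]
        for parent in self.protos:
            try:
                return parent.lookup(name, seen)
            except KeyError:
                continue
        raise KeyError(name)

    def send(self, name, *args):
        """Methods found in a parent still run with the original receiver as self."""
        value = self.lookup(name)
        return value(self, *args) if callable(value) else value
```

For example, with `animal = Proto(name="animal", speak=lambda self: self.send("name") + " makes a sound")` and `dog = animal.clone(name="dog")`, the message `dog.send("speak")` is delegated to `animal` but answers with the dog’s own name. Appending a second object to `dog.protos` gives the clone another parent, which is the multiple-inheritance trick.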

Eliminating classes provides some other useful benefits. Classes represent static (global) state, which raises thorny issues related to concurrent access and security.

Not everything about prototypes is rosy though. To compensate for the lack of classes (which are useful for describing shared behavior), Self and Io came up with traits objects. These are normal objects like any other, yet act as a repository for common state and behavior. Cloning these objects is akin to creating an instance of a class. The problem with traits objects is that they can respond to messages which are not meant for them (usually resulting in a VM exception).
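The failure mode is easy to reproduce in a toy model. In the Python sketch below, `SlotObject`, `point_traits` and `magnitude_squared` are invented names, and the single `parent` link is a simplification of real parent slots.

```python
class SlotObject:
    """A minimal prototype-style object with one optional parent."""

    def __init__(self, parent=None, **slots):
        self.parent = parent
        self.slots = slots

    def send(self, name, *args):
        """Find the slot along the parent chain, but run it with the original receiver."""
        holder = self
        while holder is not None:
            if name in holder.slots:
                value = holder.slots[name]
                return value(self, *args) if callable(value) else value
            holder = holder.parent
        raise AttributeError("message not understood: " + name)


# The traits object holds shared behavior but has no x or y of its own.
point_traits = SlotObject(
    magnitude_squared=lambda self: self.send("x") ** 2 + self.send("y") ** 2
)

# A concrete point supplies the state and delegates behavior to the traits object.
point = SlotObject(parent=point_traits, x=3, y=4)
```

Sending `magnitude_squared` to `point` works as intended. Sending it to `point_traits` directly is also accepted, because the traits object is an ordinary object that happens to hold the method, and it only fails later when the method asks for an `x` slot that is not there.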

It’s not clear-cut whether prototypes are better than classes, or vice versa. At least one can emulate prototypes in Smalltalk with a bit of meta-object hackery.

Opinions on Concurrency

I am quite excited about Io’s actor-based concurrency model. I think it would be a great fit for Smalltalk, and I am keen to implement it in Panda Smalltalk. Io’s design seems much more conceptually sound than Smalltalk-80’s concurrency model (simple user-level threads). It fits in well with the notion of objects sending messages to other objects. With an actor scheme, objects will be able to send asynchronous messages, which return immediately and never block. The result of an async message is always a future object. This kind of object is essentially a proxy for the real value of a method when it is invoked some time in the future by the scheduler. However, sending a message to a future will result in blocking until the real value becomes available.
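Here is a minimal, single-threaded sketch of that scheme in Python. It assumes a cooperative scheduler with one shared message queue, and `Scheduler`, `Future`, `Actor` and `async_send` are names invented for this example rather than Io’s actual interface. "Blocking" on a future is modelled as running queued messages until the value shows up.

```python
from collections import deque


class Scheduler:
    """Holds pending message deliveries and runs them one at a time."""

    def __init__(self):
        self.queue = deque()

    def post(self, task):
        self.queue.append(task)

    def run_one(self):
        if not self.queue:
            raise RuntimeError("deadlock: nothing left to run")
        self.queue.popleft()()


class Future:
    """A placeholder for the result of a message that has not run yet."""

    _unset = object()

    def __init__(self, scheduler):
        self._scheduler = scheduler
        self._value = Future._unset

    def resolve(self, value):
        self._value = value

    def is_ready(self):
        return self._value is not Future._unset

    def result(self):
        # Touching the future waits: keep running other work until resolved.
        while not self.is_ready():
            self._scheduler.run_one()
        return self._value


class Actor:
    """Wraps an ordinary object so that messages to it are queued, not run."""

    def __init__(self, scheduler, target):
        self._scheduler = scheduler
        self._target = target

    def async_send(self, name, *args):
        future = Future(self._scheduler)

        def deliver():
            future.resolve(getattr(self._target, name)(*args))

        self._scheduler.post(deliver)
        return future  # returned immediately, before the method has run
```

`async_send` never runs the target method itself; it only queues the delivery and hands back the future. The method body executes later, when something asks a future for its `result()` and the scheduler gets a turn.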

Io’s actors are built upon lightweight threads, which is an aspect I also like. I would rather not make Panda’s VM fully multi-threaded, as it would add way too much complexity and non-determinism for my liking. I can get around the problems of blocking I/O by using select() or some other I/O multiplexer.
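As a rough illustration of the multiplexing idea, the Python sketch below uses the standard `selectors` module, which wraps select() and its relatives. The helper name `wait_for_readable` is hypothetical; a real VM would do this in C inside its scheduler loop.

```python
import selectors
import socket


def wait_for_readable(sockets, timeout=1.0):
    """Return the sockets that can be read right now without blocking.

    A single-threaded scheduler can call this once per turn and resume only
    the lightweight threads whose I/O is ready.
    """
    sel = selectors.DefaultSelector()
    try:
        for sock in sockets:
            sel.register(sock, selectors.EVENT_READ)
        return [key.fileobj for key, _events in sel.select(timeout)]
    finally:
        sel.close()


def demo():
    """Two connected pairs; only the first one has data waiting."""
    a, b = socket.socketpair()
    c, d = socket.socketpair()
    try:
        b.sendall(b"ping")
        ready = wait_for_readable([a, c])
        return ready == [a], a.recv(4)
    finally:
        for sock in (a, b, c, d):
            sock.close()
```

The one OS thread waits on all descriptors at once, so a lightweight thread stuck on a slow socket never stalls the others.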

Multi-VMs seem to be all the rage now, having been implemented in Io and Rubinius. For VM laymen, multi-VMs are virtual machines which support multiple lightweight processes, each running in its own native thread. Each lightweight process has its own heap, garbage collector, and interpreter instance. They are thus distinct from lightweight threads.

They were experimented with in Java, as a means to reduce startup time by not having to spend time JIT-compiling that obscenely large class library multiple times. I believe Emacs has something similar. Erlang has them as well, but rather as a means of implementing scalable concurrency (think thousands of independent processes!).

I for one am not convinced of their benefit (at least in the context of Smalltalk). They have all the disadvantages of shared-nothing processes with few of the advantages. A crash in one of the lightweight processes will still bring down the entire VM. I think they make sense in Erlang, where a lot of static state is eliminated, such that forking a lightweight process is quite a cheap operation. In Smalltalk, all classes and other static state would have to be copied to the new process. This is a bit too expensive for my liking.