As I mentioned in my talk at SCaLE 17x, if you aren’t using containers for building your application yet, it’s likely you will in the not-so-distant future. Moving towards an immutable base OS is a very likely future because the security advantages are so compelling. With that comes a need for a mutable playground, and containers generally fit the bill. This is something I saw coming when I started making Builder, so we have had a number of container abstractions for some time.

With growing distributions, like Fedora’s freshly released Silverblue, it becomes even more necessary to push those container boundaries soon.

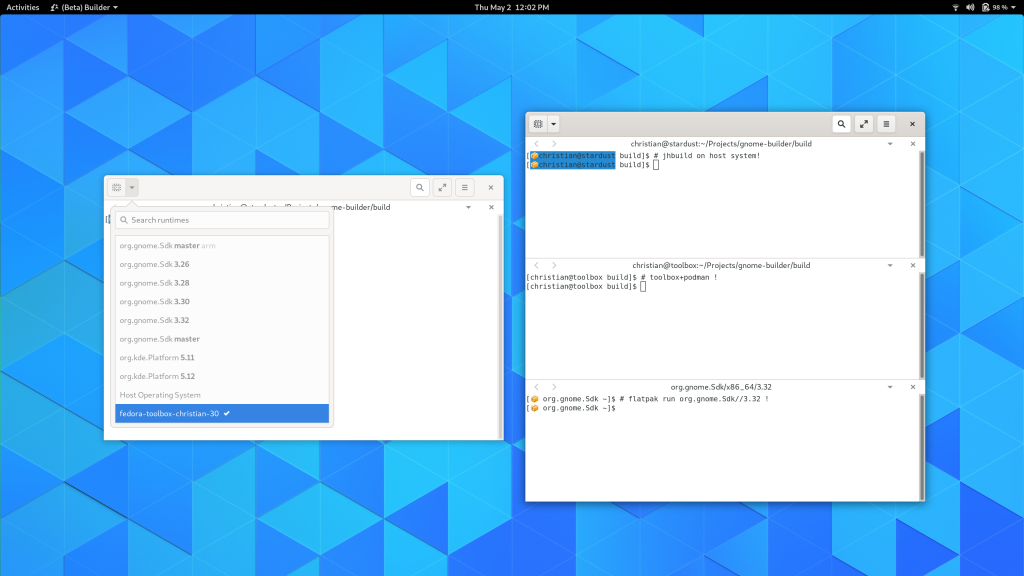

This week I started playing with some new ideas for a terminal workspace in Builder. The goal is to be a bit of a Swiss Army knife for container-oriented development. I think there is a lot we can offer by building on the rest of Builder, even if your primary programming workhorse is a terminal and the IDE experience is not for you. Plumbing is plumbing is plumbing.

I’m interested in getting feedback on how developers are using containers to do their development. If that is something that you’re interested in sharing with me, send me an email (details here) that is as concise as possible. It will help me find the common denominators for good abstractions.

What I find neat is that, using the abstractions in Builder, you can make a container-focused terminal in a couple of hours of tinkering.

I also started looking into Vagrant integration, and the basics now work. But we’ll have to introduce a few hooks into the build pipeline based on how projects want to be compiled. In most cases, it seems the VMs are used with dynamic languages to push the app rather than to compile it, but I have no idea how pervasive that is. I’m curious how projects that compile in the VM/container deal with synchronizing code.

Another refactor that needs to be done is to give plugins insight into whether or not they can pass file descriptors to the target. Passing an FD over SSH is not possible (although in some cases it can be emulated), so plugins like gdb will have to come up with alternate mechanisms in that scenario.
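For anyone unfamiliar with why FD passing is tied to the local machine, here is a minimal Python sketch of the usual mechanism: sending a descriptor as `SCM_RIGHTS` ancillary data over a Unix domain socket. This is not Builder's code. The helper names `send_fd`, `recv_fd`, and `demo` are made up for illustration, and it assumes a Unix-like system. The kernel duplicates the descriptor into the receiver, which is something a byte stream like an SSH connection has no way to carry.

```python
import array
import os
import socket


def send_fd(sock, fd):
    """Send one file descriptor over a Unix domain socket (hypothetical helper)."""
    # At least one byte of regular data must accompany the ancillary message.
    ancillary = [(socket.SOL_SOCKET, socket.SCM_RIGHTS, array.array("i", [fd]))]
    sock.sendmsg([b"x"], ancillary)


def recv_fd(sock):
    """Receive one file descriptor sent with send_fd (hypothetical helper)."""
    fds = array.array("i")
    _msg, ancdata, _flags, _addr = sock.recvmsg(1, socket.CMSG_LEN(fds.itemsize))
    for level, ctype, data in ancdata:
        if level == socket.SOL_SOCKET and ctype == socket.SCM_RIGHTS:
            # Drop any trailing bytes that do not form a whole int.
            fds.frombytes(data[: len(data) - (len(data) % fds.itemsize)])
    if not fds:
        raise RuntimeError("no file descriptor received")
    return fds[0]


def demo():
    """Pass the write end of a pipe across a socketpair and write through it."""
    left, right = socket.socketpair(socket.AF_UNIX, socket.SOCK_STREAM)
    read_end, write_end = os.pipe()
    try:
        send_fd(left, write_end)
        received = recv_fd(right)  # a new FD number referring to the same pipe
        os.write(received, b"hello")
        os.close(received)
        return os.read(read_end, 5)
    finally:
        os.close(write_end)
        os.close(read_end)
        left.close()
        right.close()


if __name__ == "__main__":
    print(demo())
```

Both ends of the socket live under the same kernel here, which is exactly the condition that goes away once the target sits behind SSH.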

I will say that trying out Vagrant has been a fairly disappointing experience compared to our Flatpak workflow in Builder. The number of ways it can break is overwhelming and the performance reminds me of my days working on virtualization technology. It makes me feel even more confident in the Flatpak architecture for desktop developer tooling.