As I’ve already announced internally, I’m stepping down from putting together an STF application for this year. For inquiries about the 2025 application, please contact Adrian Vovk going forward. This is independent of the 2024 STF project, which we’re still in the process of wrapping up. I’m sticking around for that until the end.

The topic of this blog post is not the only reason I’m stepping down but it is an important one, and I thought some of this is general enough to be worth discussing more widely.

In the context of the Foundation issues we’ve had throughout the STF project I’ve been thinking a lot about what structures are best suited for collectively funding and organizing development, especially in the context of a huge self-organized project like GNOME. There are a lot of aspects to this, e.g. I hadn’t quite realized just how important having a motivated, talented organizer like Sonny is to successfully delivering a complex project. But the specific area I want to talk about here is how power and responsibilities should be split up between different entities across the community.

This is my personal view, based on having worked on GNOME in a variety of structures over the years (volunteer, employee, freelancer, for- and non-profit, worked under grants, organized grants, etc.). I don’t have any easy answers, but I wanted to share how my perspective has shifted as a result of the events of the past year, which I hope will contribute to the wider ongoing discussion around this.

A Short History

Unlike many other user-facing free software projects, GNOME has had strong corporate involvement since early in its history, with many different product companies and consultancies paying people to work on various parts of it. The project grew up during the Dotcom bubble (younger readers may not remember this, but “Linux” was the “AI” of that era), and many of our structures date back to this time.

The Foundation was created in those early days as a neutral organization to hold resources that should not belong to any one of the companies involved (such as the trademark, donation money, or the development infrastructure). A lot of checks and balances were put in place to avoid one group taking over the Foundation or the Foundation itself overshadowing other players. For example, hiring developers via the Foundation was an explicit non-goal, advisory board companies do not get a say in the project’s technical direction, and there is a limit to how many employees of any single company can be on the board. See this episode of Emmanuele Bassi’s History of GNOME Podcast for more details.

The Dotcom bubble burst and some of those early companies died, but there continued to be significant corporate investment, e.g. from enterprise desktop companies like Sun, and then later via companies from the mobile space during the hype cycles around netbooks, phones, and tablets around 2010.

Fast forward to today, and this situation has changed drastically. In 2025 the desktop is not a growth area for anyone in the industry, and it hasn’t been in over a decade. Ever since the demise of Nokia and the consolidation of the iOS/Android duopoly, most of the money in the ecosystem has been in server and embedded use cases.

Today, corporate involvement in GNOME is limited to a handful of companies with an enterprise desktop business (e.g. Red Hat), and consultancies who mostly do low-level embedded work (e.g. Igalia with browsers, or Centricular with GStreamer).

Retaining the Next Generation

While the current level of corporate investment, in combination with volunteer work from the wider community, has been enough to keep the project afloat in recent years, we have a pretty glaring issue with our new contributor pipeline: there are very few job openings in the field.

As a result, many people end up dropping out or reducing their involvement after they finish university. Others find jobs on adjacent technologies where they occasionally get work time for GNOME-related stuff, and put in a lot of volunteer time on top. Others still are freelancing, applying for grants, or trying to make Patreon work.

While I don’t have firm numbers, my sense is that the number of people in precarious situations like these has been going up since I got involved around 2015. The time when you could just get a job at Red Hat was already long gone when I joined, but for a while e.g. Endless and Purism had quite a few people doing interesting stuff.

In a sense this lack of corporate interest is not unusual for user-facing free software — maybe we’re just reverting to the mean. Public infrastructure simply isn’t commercially profitable. Many other projects, particularly ones without corporate use cases (e.g. Tor) have always been in this situation, and thus have always relied on grants and donations to fund their development. Others have newly moved in this direction in recent years with some success (e.g. Thunderbird).

Foundational Issues

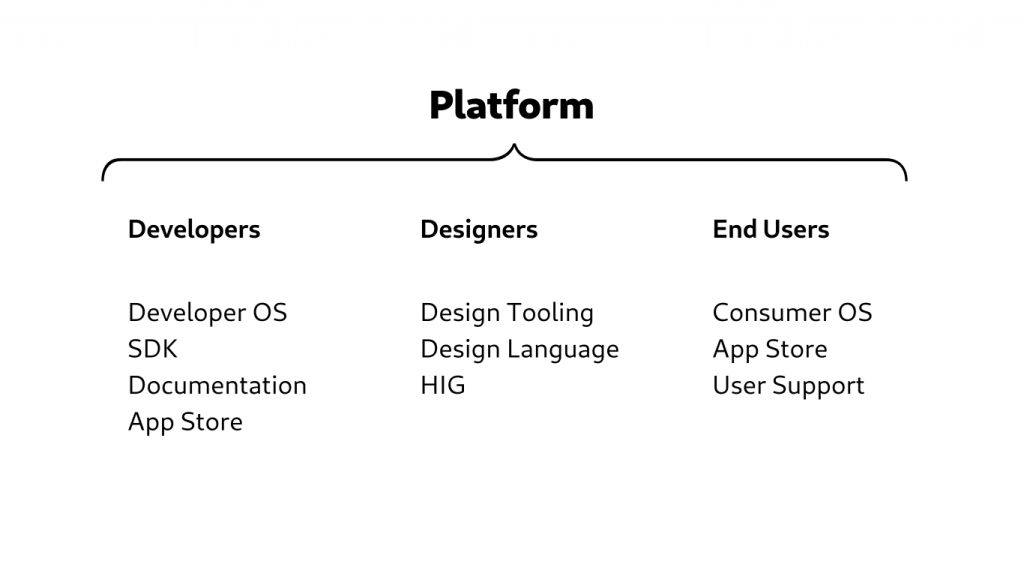

I think what many of us in the community have wanted to see for a while is exactly what Tor, Thunderbird, Blender et al. are doing: Start doing development at the Foundation, raise money for it via donations and grants, and grow the organization to pick up the slack from shrinking corporate involvement.

I know why this idea is so seductive to many of us, and has been for years. It’s in fact so popular, I found four board candidacies (1, 2, 3, 4) from the last few election cycles proposing something like it.

On paper, the Foundation seems perfect as the legal structure for this kind of initiative. It already exists, it has the same name as the wider project, and it already has the infrastructure to collect donations. Clearly all we need to do is to raise a bit more money, and then use that money to hire community members. Easy!

However, after having been in the trenches trying to make it work over the past year, I’m now convinced it’s a bad idea, for two reasons: in the short to medium term the current structure doesn’t have the necessary capacity, and in the longer term there are too many risks involved if something goes wrong.

Lack of Capacity

Simply put, what we’ve experienced in the context of the STF project (and a few other initiatives) over the past year is that the Foundation in its current form is not set up to handle projects that require significant coordination or operational capacity. There are many reasons for this — historical, personal, structural — but my takeaway after this year is that there need to be major changes across many of the Foundation’s structures before this kind of thing is feasible.

Perhaps given enough time the Foundation could become an organization that can fund and coordinate significant development, but there is a second, more important reason why I no longer think that’s the right path.

Structural Risk

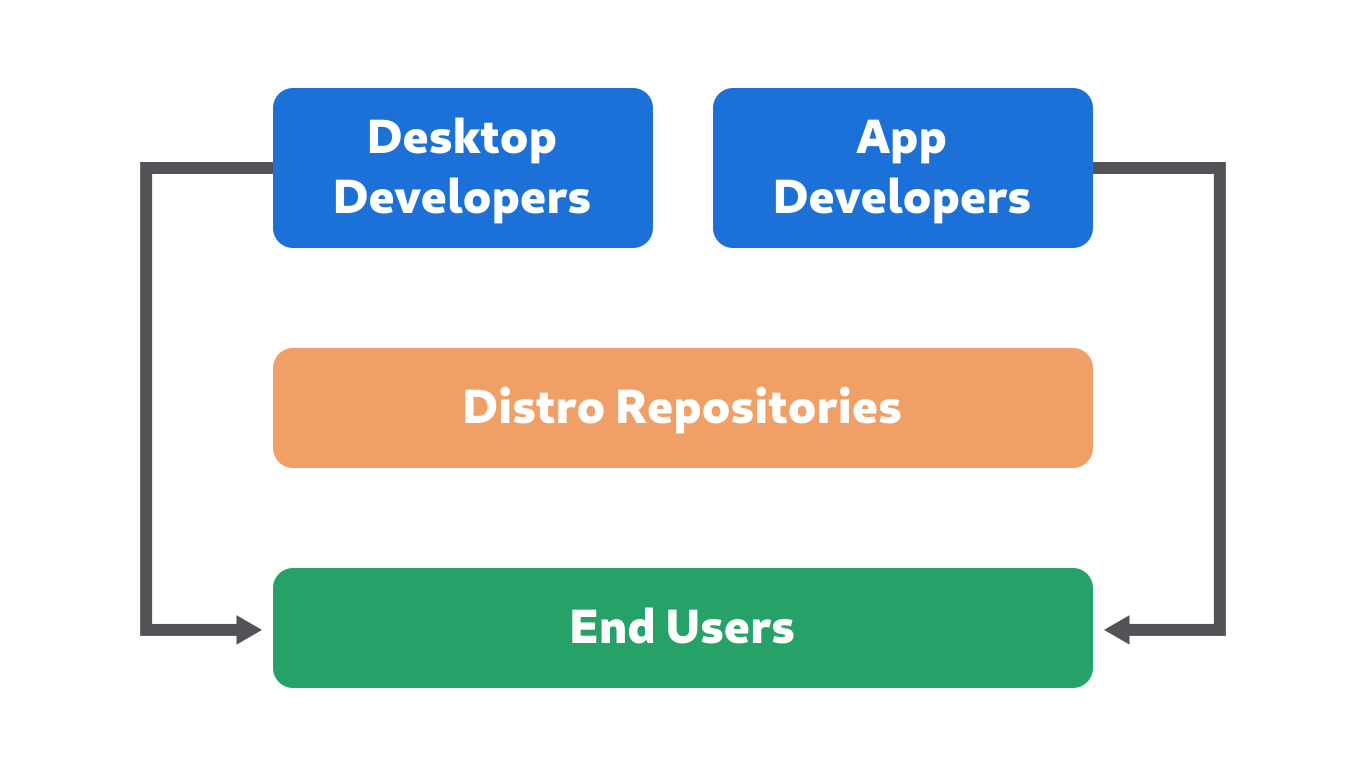

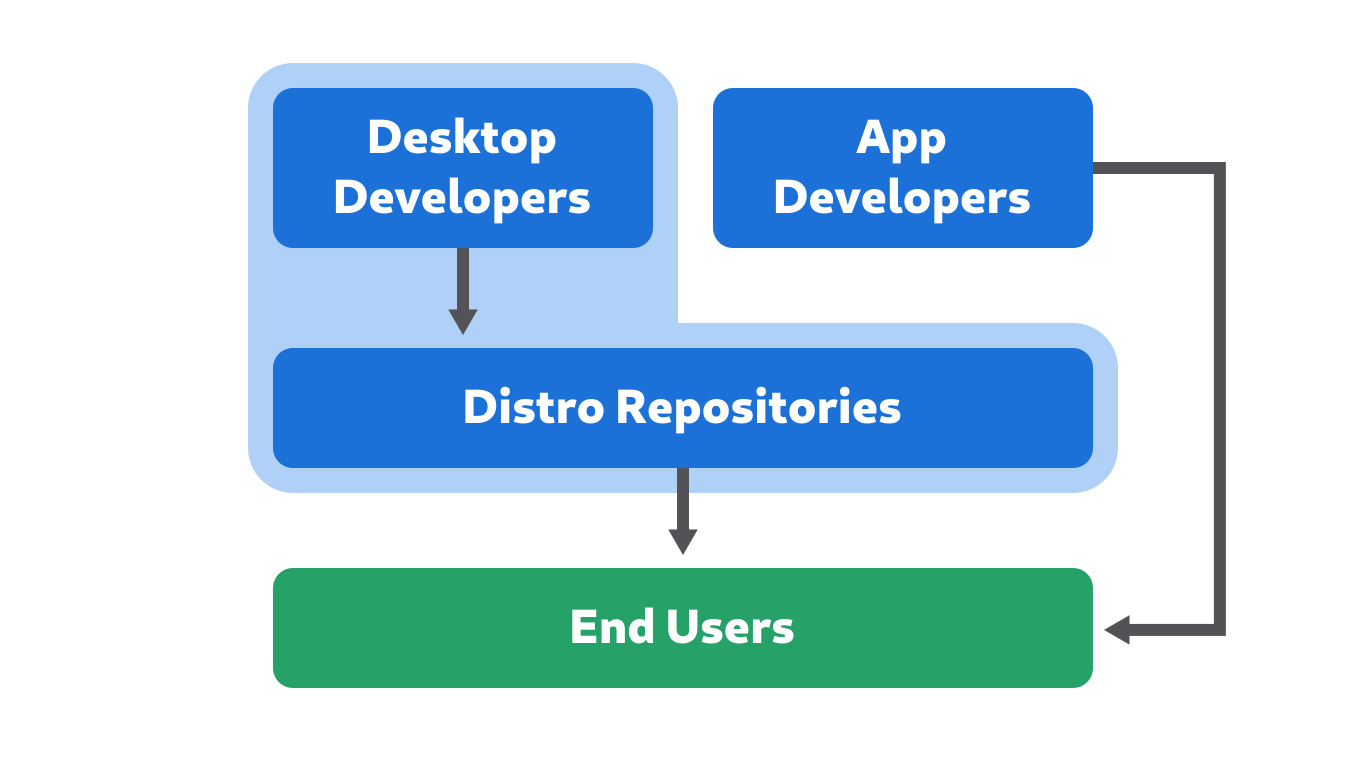

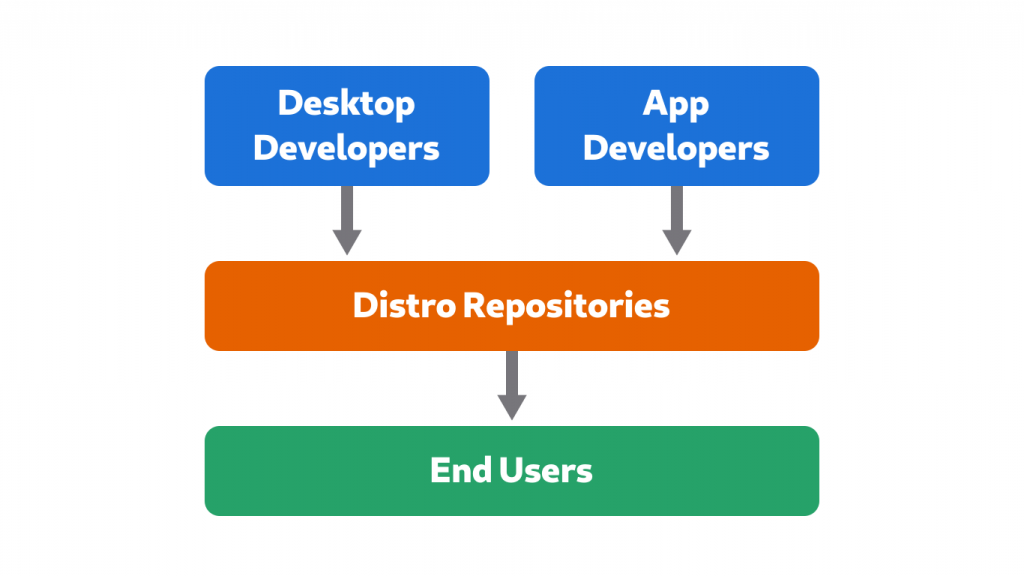

One advantage of GNOME’s current decentralized structure is its resilience. Having a small Foundation at the center that only handles infrastructure, with several independent companies and consultancies around it doing development, means that different parts are insulated from each other if something goes wrong.

If there are issues inside e.g. Codethink or Igalia, the maximum damage is limited and the wider project is never at risk. People don’t have to leave the community if they want to quit their current job; ideally they can just move to another company and continue most of their upstream work.

The same is not true of projects with a monolithic entity at the center. If there’s a conflict in that central monolith it can spiral ever wider if it isn’t resolved, affecting more and more structures and people, and doing drastically more damage.

This is a lesson we’ve unfortunately had to learn the hard way when, out of the blue, Sonny was banned last year. I’m not going to talk about the ban here (it’s for Sonny to talk about if/when he feels like it), but suffice it to say that it would not have happened had we not done the STF project under the Foundation, and many community members including myself do not agree with the ban.

What followed was, for some of us, maybe the most stressful 6 months of our lives. Since last summer we’ve had to try and keep the STF project running without its main architect, while also trying to get the ban situation fixed, as well as dealing with a number of other issues caused by the ban. Thousands of volunteer hours were probably burned on this, and the issue is not even resolved yet. Who knows how many more will be burned before it’s over. I’m profoundly sad thinking about the bugs we could have fixed, the patches we could have reviewed, and the features we could have designed in those hours instead.

This is, to me, the most important takeaway and the reason why I no longer believe the Foundation should be the structure we use to organize community development. Even if all the current operational issues are fixed, the risk of something like this happening is too great, the potential damage too severe.

What are the Alternatives?

If using the Foundation is too risky, what other options are there for organizing development collectively?

I’ve talked to people in our community who feel that NGOs are fundamentally a bad structure for development projects, and that people should start more new consultancies instead. I don’t fully buy that argument, but it’s also not without merit in my experience. Regardless though, I think everyone has also seen at one point or another how dysfunctional corporations can be. My feeling is it probably also heavily depends on the people and culture, rather than just the specific legal structure.

I don’t have a perfect solution here, and I’m not sure there is one. Maybe the future is a bunch of new consulting co-ops doing a mix of grants and client work. Maybe it’s new non-profits focused on development. Maybe we need to get good at Patreon. Or maybe we all just have to get a part-time job doing something else.

Time will tell how this all shakes out, but the realization I’ve come to is that the current decentralized structure of the project has a lot of advantages. We should preserve this and make use of it, rather than trying to centralize everything on the Foundation.