Just at the beginning of this month I was invited to going to Bucharest, Romania, for giving a talk on GNOME at this year’s def.camp. The conference seems to be an established event in the Romanian security community and has been organised quite well. As I said in my talk I was happy to be there to tell those people about Free Software. I saw many people running around with their proprietary systems. It seems that certain parts of the security community does not believe that the security of a system greatly increases when it’s based on Free Software. In fairness, the event seemed to be a bit on the suit-and-tie-side where Windows is probably much more common than people want.

Andrei Avădănei opened the conference by saying how happy he was that, even at that unholy hour (09:00 in the morning…) he counted 1100 people from 30 countries and he expected that number to grow over the following hours. It didn’t feel that big, but the three halls were quite large indeed. One of those halls was the “hacking village” in which participants can practise real life “problem solving skills”. The hacking village was more of an expo where vendors had there booths but also some interesting security challenges. My favourite booth was the Virtual Reality demo. Someone brought an HTC VR system and people could play a simple game. I’ve tried an Oculus Rift before in which I road a roller coaster. With the HTC system, I also had some input methods which really enhanced the experience. Very immersive.

Anyway, Andrei mentioned, how happy he was to have the biggest security event in Romania being very grassroots- and community driven. Unfortunately, he then let some representative from Orange, the main sponsor, talk. Of course, you cannot run a big event like that without having enough financial backup. But then giving the main stage, the prime opening spot to the main sponsor does not leave the impression that they are community driven… I expected the first talk after the opening to be setting the theme for the conference. In this case, it was a commercial. That doesn’t actually fit the conference too badly, because out the 32 talks I counted 13 (or 40%) being delivered from sponsors. With sponsors I mean all companies listed on the homepage for their support. It may very well be that I am mistaking grassrooty supporters for commercial sponsors.

The Orange CTO mentioned that connectivity is the new electricity which shapes countries and communities. For them, a telco, in order to ensure connectivity, they need to maintain security, he said. The Internet of connected devices (IoT) is growing exponentially and so are the threats. Orange has to invest in order to maintain security for its client. And they do, it seems. He showed a fancy looking “threat map” which showed attacks in real-time. Probably a Snort (or whatever IDS is currently the en-vogue) with a map showing arrows from Geo-IP locations pointing towards Romania.

Next up was Jason Street who talked about how he failed doing his job. He was a blue team security guy, he said, and worked for a bank as security information officer. He was seen by the people as the bad guy making your life dreadful. That was bad, he said, because he didn’t teach the people the values and usefulness of information security. Instead he taught them that they better not get to meet him. The better approach, he said, is trying to be part of a solution not looking for problems. Empower the employees in what information security is doing or trying to do. It was a very entertaining presentation given by a very engaged speaker. I couldn’t get so much from the content though.

Vlad from Orange talked about their challenges providing an open, easy to use, and yet secure WiFi infrastructure. He referred on the user expectations and the business requirements. Users expect to be able to just connect without much hassle. The business seems to be wanting to identify the user and authorise usage. It was mainly on a high level except for a few runs of authentication protocol. He mentioned EAP-SIM and EAP-AKA as more seamless authentication protocols compared to, say, a captive Web portal. I didn’t know that it’s possible to use your perfectly valid shared secret in your SIM for authentication. It makes perfect sense. Even more so for a telco such as Orange.

Mihai from Bitdefender talked about Browser instrumentation for exploit analysis. That means, as I found out after the talk, to harness the Browser’s internals to analyse malicious payloads. He showed how your Browser (well… Internet Explorer with Flash) is exploited nowadays. He ran a “Cerber” demo of exploiting an Internet Explorer with some exploit kit. He showed fiddler and process explorer which displayed the HTTP traffic and the spawned processes. After visiting a simple Web page the malicious payload was delivered, exploited the IE, and finally crashed it. The traffic in fiddler revealed that the malware was delivered via a crafted Flash program. He used a Flash decompiler to look at the files. But he didn’t really find the exploit itself, probably because of some obfuscation. So what is the actual exploit? In order to answer that properly, you need to inspect the memory during runtime, he said. That’s where Browser instrumentation comes into play. I think he interposed several functions, such as document.write, eval, object parameters, Flash’s LoadBytes, etc to analyse what goes in and out. All that information was then saved to disk in separate files, i.e. everything that went to document.write was written to c:\share\document.write, everything that Flash’s loadbytes took, was written to c:\shared\loadbytes. He showed another demo with the Sundown exploit delivery framework which successfully exploited his browser. He then showed the filesystem containing the above mentioned information which made it easier to spot to actual exploit and shellcode. To prevent such exploits, he recommended to use Windows 10 and other browsers than Internet Explorer. Also, he recommended to use AdBlock to stop “malvertising”. That is in line with what I recommended several moons ago when analysing embedded JavaScripts being vulnerable for DOM-based XSS. The method is also very similar to what I used back in the day when hacking on Chromium and V8, so I found the presentation quite good. Except for the speaker :-/ He was looking at his slides with his back to the audience often and the audio wasn’t really good. I respect him for having shown multiple demos with virtual machine snapshots. I wouldn’t have done it, because demos usually fail! 😉

Inbar Raz talked about Tinder bots. He said he was surprised to find so many “matches” when being in Sweden. He quickly noticed that he was chatted up by bots, though, because he got sent the very same message from different profiles. These profiles also don’t necessarily make sense. For example, the name and the age shown on the Tinder profile did not match the linked Instagram or Facebook profiles. The messages he received quickly included a link to a dodgy Web site. When asking whois about the ownership he found many more shady domains being used for dragging people to porn sites. The technical details weren’t overly elaborate, but the talk was quite entertaining.

Raul Alvarez talked about reverse engineering polymorphic ransom ware. I think he mentioned those Locky type pieces of malware which lock your computer or files. Now you might want to know how that malware actually works. He mentioned Ollydbg, immunity debugger, and x64dgb as tools to use for reverse engineering your files. He said that malware typically includes an unpacker which you need to survive first before you’re able to see the actual malware. He mentioned on-demand polymorphic functions which are being called during the unpacking stage. I guess that the unpacker decrypts or uncompresses to different bytes everytime it’s run. The randomness is coming from the RDTSC call, he said. The way I understand that mechanism, the unpacker only modified a few bytes at a time and potentially modifies irrelevant bytes. Imagine code that jumps over a few bytes. These bytes could be anything, because they are never used let alone executed. But I’m not sure whether this is indeed the gist of what he described in a rather complicated fashion. His recommendation for dealing with metamorphic code is to catch it right when it finished decrypting the payload. I think everybody wishes to be able to do that indeed… He presented a general method for getting rid of malware once it hit you: Start in safe mode and remove suspicious registry entries for the “run” key. That might not be interesting to Windows people, but now I, being very ignorant about Windows, have learned something 🙂

Chris went on to talk about securing a mobile cryptocoin wallet. If you ask me, he really meant how to deal with the limitation of the platform of his choice, the iPhone. He said that sometimes it is very hard to navigate the solution space, because businesses are not necessarily compatible with blockchains. He explained some currencies like Bitcoin, stellar, ripple, zcash or ethereum. The latter being much more flexible to also encode contracts like “in the event of X transfer Y amount of money to account Z”. Financial institutions want to keep their ledgers private, but blockchains were designed to run in public, he said. In addition, trust between financial institutions is low. Bitcoin is hard to use, he said, because the cryptography itself is hard to understand and to use. A balance has to be struck between usability and security. Secrets, he said, need to be kept secret. I guess he means that nobody, not even the user, may access the secret an application needs. I fundamentally oppose. I agree that secrets need to be kept as securely as possible. But secrets must not be known by anyone else but the users who are supposed to benefit from them. If some other entity controls my secret, I am not really in control. Anyway, he looked at existing bitcoin wallet applications: Bither and Breadwallet. He thinks that the state of the art can be improved if you are willing to break the existing protocol. More precisely, he wants to leverage the “security hardware” present in current mobile devices like Biometric sensors or “enclaves” in modern CPUs to perform the operations based on the secret unextractibly stored in hardware. With such an enclave, he wants to generate a key there and use it to sign data without the key ever leaving the enclave. You need to change the protocol, he said, because Apple’s enclave uses secp256r1, but Bitcoin uses secp256k1.

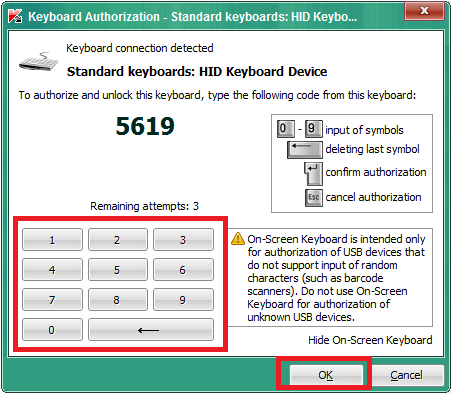

My own talk went reasonably well, I think. I am not super happy but happy enough. But I’ve realised a few times now that I left out things I wanted to mention or how I could have better explained what I wanted. Then again, being perfect would be boring, so better leave some room for improvement 😉 I talked about how I think GNOME is a good vendor of security software. It’s focus on user experience is it’s big advantage. The system should make informed decisions as much as possible and try to leave the user out as much as possible. Security should be an inherent feature, not something that you need to actively care about. I expected a more extreme reaction from the security focused audience, but it seemed people mostly agreed. In my mind, “these security people” translate security with maximum control placed in users’ hands which has to manifest itself in being able to control each and every aspect of a solution. That view is not compatible with trying to leave the user out of the security equation. It may be that I am doing “these security people” wrong. Or that they have changed. Or simply that the audience was not composed of the people I thought they were. I was hoping for developers creating security software and I mentioned that GNOME libraries would perform great for their tasks. Let’s see whether anyone actually takes my word for it and complains to me 😉

Matt Suiche followed “the money of security companies, IPOs, and M&A”. In 2016, he said, the situation is not very different from the 90s: Software still has bugs, bad configuration is still a problem, default passwords are still being used… The newly founded infosec companies reported by Crunchbase has risen a lot, he said. If you multiply that number with dollars, you can see 40 billion USD being raised since 1998. What’s different nowadays, according to him, is that people in infosec are now more business oriented rather than technically. We have more “cyber” now. He referred to buzzwords being spread. Also we have bug bounty programmes luring people into reporting vulnerabilities. For example, JP Morgan is spending half a billion USD on cyber security, he said. Interestingly, he showed that the number of vulnerabilities, i.e. RCE CVEs has increased, but the number of actual exploitations within the first 30 days after a patch has decreased. He concluded that Microsoft got more efficient at mitigating vulnerabilities. I think you can also conclude other things like that people care less about exploitation or that detection of exploitation has gotten worse. He said that the cost of an exploit has increased. It wasn’t long ago here you could cook up an exploit within two weeks. Now you need several people for three months at least. It’s been a well made talk, but a bit too fluffy for my taste.

Stefan and David from Kaspersky talked off-the-record (i.e. without recordings) about “read-world lessons about spies every security researcher should know”. They have been around the industry for more than a decade and they have started to notice patterns, they said. Patterns of weird things that happen which might not be easy to explain at first. It all begins with the realising that we live in a world, whether we want it or not, where we have certain control over the success of espionage attacks. Right now people reverse engineer malware which means that other people’s operations are being disrupted. In fact, he claimed that they reverse engineer and identify the world’s most advanced persistent threats like Duqu, Flame, Hellsing, or many others and that their company is getting better and better at identifying other people’s operations. For the first time in history, he said, we as geeks have an influence about espionage. That makes some entities not very happy and they let certain people visit you. These people come in various types. The profile of a typical government employee is that they are very open and blunt about their desires. Mostly, they employ patriotism to persuade you. Another type is the impersonator, they said. That actor is not perfectly honest with you. He gave an example of him meeting another person who identified with the very same name as him. It got strange, he said, when he met that person on a different continent a few months later and got offered to perform a highly paid training. Supposedly only to build up a relationship. These people have enough budget to get closer to you, they said, Another type of attacker is the “Banya Girl”. Geeks, they said, who sat most of their life in front of the computer are easily attracted by girls. They have it easier to get into your room or brain. His example took place one year ago: He analysed a satellite exploiting malware later known as Turla when he met this super beautiful girl in the hotel who sat there everyday when he went to the sauna. The day they released the results about Turla they went for dinner together and she listened to a phone call he had with a reporter. The girl said something like “funny that you call it Turla. We call it Uroboros”. Then he got suspicious and asked her about who “they” are. She came up with stories he found weird and seemed to be convinced that she knows more than she was willing to reveal. In general, they said, asking for a selfie or a Facebook friend request can be an effective counter measure to someone spying on you. You might very well ask what to do when you think you’re targeted. It’s probably best to do nothing, they said. It’s their game, you better not start playing it even if you wake up in the middle of it. You can try to take care about your OpSec to protect against certain data being collected or exfiltrated. After all, people are being killed based on metadata. But you should also try to not get yourself into trouble. Sex and money are probably the oldest weapons people employ against you. They also encouraged people to define trust and boundaries for existing and upcoming relationships. If you become too paranoid, you’ve already lost the battle, they said. Keep going to conferences, keep meeting people, and don’t close yourself down.

It were two busy days in Bucharest. I’m happy to have gone and I hope I will have another chance to visit the lovely city 🙂 By that time the links here in this post will probably be broken 😉 I recommended using the “archive” URLs, i.e. https://def.camp/archives/2016/ already now, but nobody is listening to me… I can also not link to the individual talks, because the schedule page is relatively click-intensive, i.e. not deep-linkable 🙁 …