Having spent 20 years of my life on Desktop Linux I thought I should write up my thinking about why we so far hasn’t had the Linux on the Desktop breakthrough and maybe more importantly talk about the avenues I see for that breakthrough still happening. There has been a lot written of this over the years, with different people coming up with their explanations. My thesis is that there really isn’t one reason, but rather a range of issues that all have contributed to holding the Linux Desktop back from reaching a bigger market. Also to put this into context, success here in my mind would be having something like 10% market share of desktop systems, that to me means we reached critical mass. So let me start by listing some of the main reasons I see for why we are not at that 10% mark today before going onto talking about how I think that goal might possible to reach going forward.

Things that have held us back

- Fragmented market

One of the most common explanations for why the Linux Desktop never caught on more is the fragmented state of the Linux Desktop space. We got a large host of desktop projects like GNOME, KDE, Enlightenment, Cinnamon etc. and a even larger host of distributions shipping these desktops. I used to think this state should get a lot of the blame, and I still believe it owns some of the blame, but I have also come to conclude in recent years that it is probably more of a symptom than a cause. If someone had come up with a model strong enough to let Desktop Linux break out of its current technical user niche then I am now convinced that model would easily have also been strong enough to leave the Linux desktop fragmentation behind for all practical purposes. Because at that point the alternative desktops for Linux would be as important as the alternative MS Windows shells are. So in summary, the fragmentation hasn’t helped for sure and is still not helpful, but it is probably a problem that has been overstated.

- Lack of special applications

Another common item that has been pointed to is the lack of applications. We know that for sure in the early days of Desktop Linux the challenge you always had when trying to convince anyone of moving to Desktop Linux was that they almost invariably had one or more application they relied on that was only available on Windows. I remember in one of my first jobs after University when I worked as a sysadmin we had a long list of these applications that various parts of the organization relied on, be that special tools to interface with a supplier, with the bank, dealing with nutritional values of food in the company cafeteria etc. This is a problem that has been in rapid decline for the last 5-10 years due to the move to web applications, but I am sure that in a given major organization you can still probably find a few of them. But between the move to the web and Wine I don’t think this is a major issue anymore. So in summary this was a major roadblock in the early years, but is a lot less of an impediment these days.

- Lack of big name applications

Adopting a new platform is always easier if you can take the applications you are familiar with you. So the lack of things like MS Office and Adobe Photoshop would always contribute to making a switch less likely. Just because in addition to switching OS you would also have to learn to use new tools. And of course along those lines there where always the challenge of file format compatibility, in the early days in a hard sense that you simply couldn’t reliably load documents coming from some of these applications, to more recently softer problems like lack of metrically identical fonts. The font for example issue has been mostly resolved due to Google releasing fonts metrically compatible with MS default fonts a few years ago, but it was definitely a hindrance for adoption for many years. The move to web for a lot of these things has greatly reduced this problem too, with organizations adopting things like Google Docs at rapid pace these days. So in summary, once again something that used to be a big problem, but which is at least a lot less of a problem these days, but of course there are still apps not available for Linux that does stop people from adopting desktop linux.

- Lack of API and ABI stability

This is another item that many people have brought up over the years. I think I have personally vacillated over the importance of this one multiple times over the years. Changing APIs are definitely not a fun thing for developers to deal with, it adds extra work often without bringing direct benefit to their application. Linux packaging philosophy probably magnified this problem for developers with anything that could be split out and packaged separately was, meaning that every application was always living on top of a lot of moving parts. That said the reason I am sceptical to putting to much blame onto this is that you could always find stable subsets to rely on. So for instance if you targeted GTK2 or Qt back in the day and kept away from some of the more fast moving stuff offered by GNOME and KDE you would not be hit with this that often. And of course if the Linux Desktop market share had been higher then people would have been prepared to deal with these challenges regardless, just like they are on other platforms that keep changing and evolving quickly like the mobile operating systems.

- Apple resurgence

This might of course be the result of subjective memory, but one of the times where it felt like there could have been a Linux desktop breakthrough was at the same time as Linux on the server started making serious inroads. The old Unix workstation market was coming apart and moving to Linux already, the worry of a Microsoft monopoly was at its peak and Apple was in what seemed like mortal decline. There was a lot of media buzz around the Linux desktop and VC funded companies was set up to try to build a business around it. Reaching some kind of critical mass seemed like it could be within striking distance. Of course what happened here was that Steve Jobs returned to Apple and we suddenly had MacOSX come onto the scene taking at least some air out of the Linux Desktop space. The importance of this one I do find exceptionally hard to quantify though, part of me feels it had a lot of impact, but on the other hand it isn’t 100% clear to me that the market and the players at the time would have been able to capitalize even if Apple had gone belly-up.

- Microsoft aggressive response

In the first 10 years of Desktop linux there was no doubt that Microsoft was working hard to try to nip any sign of Desktop Linux gaining any kind of foothold or momentum. I do remember for instance that Novell for quite some time was trying to establish a serious Desktop Linux business after having bought Miguel de Icaza’s company Helix Code. However it seemed like a pattern quickly emerged that every time Novell or anyone else tried to announce a major Linux desktop deal, Microsoft came running in offering next to free Windows licensing to get people to stay put. Looking at Linux migrations even seemed like it became a goto policy for negotiating better prices from Microsoft. So anyone wanting to attack the desktop market with Linux would have to contend with not only market inertia, but a general depression of the price of a desktop operating systems, and knowing that Microsoft would respond to any attempt to build momentum around Linux desktop deals with very aggressive sales efforts. So in summary, this probably played an important part as it meant that the pay per copy/subscription business model that for instance Red Hat built their server business around became really though to make work in the desktop space. Because the price point ended up so low it required gigantic volumes to become profitable, which of course is a hard thing to quickly achieve when fighting against an entrenched market leader. So in summary Microsoft in some sense successfully fended of Linux breaking through as a competitor although it could be said they did so at the cost of fatally wounding the per copy fee business model they built their company around and ensured that the next wave of competitors Microsoft had to deal with like iOS and Android based themselves on business models where the cost of the OS was assumed to be zero, thus contributing to the Windows Phone efforts being doomed.

- Piracy

One of the big aspirations of the Linux community from the early days was the idea that a open source operating system would enable more people to be able to afford running a computer and thus take part in the economic opportunities that the digital era would provide. For the desktop space there was always this idea that while Microsoft was entrenched in North America and Europe there was this ocean of people in the rest of the world that had never used a computer before and thus would be more open to adopting a desktop linux system. I think this so far panned out only in a limited degree, where running a Linux distribution has surely opened job and financial opportunities for a lot of people, yet when you look at things from a volume perspective most of these potential Linux users found that a pirated Windows copy suited their needs just as much or more. As an anecdote here, there was recently a bit of noise and writing around the sudden influx of people on Steam playing Player Unknown: Battlegrounds, as it caused the relatively Linux marketshare to decline. So most of these people turned out to be running Windows in Mandarin language. Studies have found that about 70% of all software in China is unlicensed so I don’t think I am going to far out on a limb here assuming that most of these gamers are not providing Microsoft with Windows licensing revenue, but it does illustrate the challenge of getting these people onto Linux as they already are getting an operating system for free. So in summary, in addition to facing cut throat pricing from Microsoft in the business sector one had to overcome the basically free price of pirated software in the consumer sector.

- Red Hat mostly stayed away

So few people probably don’t remember or know this, but Red Hat was actually founded as a desktop Linux company. The first major investment in software development that Red Hat ever did was setting up the Red Hat Advanced Development Labs, hiring a bunch of core GNOME developers to move that effort forward. But when Red Hat pivoted to the server with the introduction of Red Hat Enterprise Linux the desktop quickly started playing second fiddle. And before I proceed, all these events where many years before I joined the company, so just as with my other points here, read this as an analysis of someone without first hand knowledge. So while Red Hat has always offered a desktop product and have always been a major contributor to keeping the Linux desktop ecosystem viable, Red Hat was focused on the server side solutions and the desktop offering was always aimed more narrowly things like technical workstation customers and people developing towards the RHEL server. It is hard to say how big an impact Red Hats decision to not go after this market has had, on one side it would probably have been beneficial to have the Linux company with the deepest pockets and the strongest brand be a more active participant, but on the other hand staying mostly out of the fight gave other companies a bigger room to give it a go.

- Canonical business model not working out

This bullet point is probably going to be somewhat controversial considering I work for Red Hat (although this is my private blog my with own personal opinions), but on the other hand I feel one can not talk about the trajectory of the Linux Desktop over the last decade without mentioning Canonical and Ubuntu. So I have to assume that when Mark Shuttleworth was mulling over doing Ubuntu he probably saw a lot of the challenges that I mention above, especially the revenue generation challenges that the competition from Microsoft provided. So in the end he decided on the standard internet business model of the time, which was to try to quickly build up a huge userbase and then dealing with how to monetize it later on. So Ubuntu was launched with an effective price point of zero, in fact you could even get install media sent to you for free. The effort worked in the sense that Ubuntu quickly became the biggest player in the Linux desktop space and it certainly helped the Linux desktop marketshare grow in the early years. Unfortunately I think it still basically failed, and the reason I am saying that is that it didn’t manage to grow big enough to provide Ubuntu with enough revenue through their appstore or their partner agreements to allow them to seriously re-invest in the Linux Desktop and invest in the kind of marketing effort needed to take Linux to a less super technical audience. So once it plateaued what they had was enough revenue to keep what is a relatively barebones engineering effort going, but not the kind of income that would allow them to steadily build the Linux Desktop market further. Mark then tried to capitalize on the mindshare and market share he had managed to build, by branching out into efforts like their TV and Phone efforts, but all those efforts eventually failed.

It would probably be an article in itself to deeply discuss why the grow userbase strategy failed here vs why for instance Android succeeded with this model, but I think the short version goes back to the fact that you had an entrenched market leader and the Linux Desktop isn’t different enough from a Mac or Windows desktops to drive the type of market change the transition from feature phones to smartphones was.

And to be clear I am not criticizing Mark here for the strategy he choose, if I where in his shoes back when he started Ubuntu I am not sure I would have been able to come up a different strategy that would have been plausible to succeed from his starting point. That said it did contribute to even further push the expected price of desktop Linux down and thus making it even harder for people to generate significant revenue from desktop linux. On the other hand one can argue that this would likely have happened anyway due to competitive pressure and Windows piracy. Canonicals recent focus pivot away from the desktop towards trying to build a business in the server and IoT space is in some sense a natural consequence of hitting the desktop growth plateau and not having enough revenue to invest in further growth.

So in summary, what was once seen as the most likely contender to take the Linux Desktop to critical mass turned out to have taken off with to little rocket fuel and eventually gravity caught up with them. And what we can never know for sure is if they during this run sucked so much air out of the market that it kept someone who could have taken us further with a different business model from jumping in.

- Original device manufacturer support

THis one is a bit of a chicken and egg issue. Yes, lack of (perfect) hardware support has for sure kept Linux back on the Desktop, but lack of marketshare has also kept hardware support back. As with any system this is a question of reaching critical mass despite your challenges and thus eventually being so big that nobody can afford ignoring you. This is an area where we even today are still not fully there yet, but which I do feel we are getting closer all the time. When I installed Linux for the very first time, which I think was Red Hat Linux 3.1 (pre RHEL days) I spent about a weekend fiddling just to get my sound card working. I think I had to grab a experimental driver from somewhere and compile it myself. These days I mostly expect everything to work out of the box except more unique hardware like ambient light sensors or fingerprint readers, but even such devices are starting to land, and thanks to efforts from vendors such as Dell things are looking pretty good here. But the memory of these issues is long so a lot of people, especially those not using Linux themselves, but have heard about Linux, still assume hardware support is a very much hit or miss issue still.

What does the future hold?

So any who has read my blog posts probably know I am an optimist by nature. This isn’t just some kind of genetic disposition towards optimism, but also a philosophical belief that optimism breeds opportunity while pessimism breeds failure. So just because we haven’t gotten the Linux Desktop to 10% marketshare so far doesn’t mean it will not happen going forward. It just means we haven’t achieved it so far. One of the key identifies of open source is that it is incredibly hard to kill, because unlike proprietary software, just because a company goes out of business or decides to shut down a part of its business, the software doesn’t go away or stop getting developed. As long as there is a strong community interested in pushing it forward it remains and evolves and thus when opportunity comes knocking again it is ready to try again. And that is definitely true of Desktop Linux which from a technical perspective is better than it has ever been, the level of polish is higher than ever before, the level of hardware support is better than ever before and the range of software available is better than ever before.

And the important thing to remember here is that we don’t exist in a vacuum, the world around us constantly change too, which means that the things that blocked us in the past or the companies that blocked us in the past might no be around or able to block us tomorrow. Apple and Microsoft are very different companies today than they where 10 or 20 years ago and their focus and who they compete with are very different. The dynamics of the desktop software market is changing with new technologies and paradigms all the time. Like how online media consumption has moved from things like your laptop to phones and tablets for instance. 5 years ago I would have considered iTunes a big competitive problem, today the move to streaming services like Spotify, Hulu, Amazon or Netflix has made iTunes feel archaic and a symbol of bygone times.

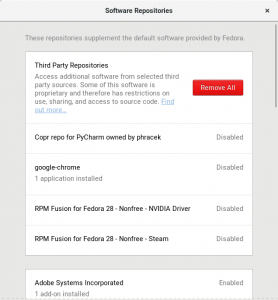

And many of the problems we faced before, like weird Windows applications without a Linux counterpart has been washed away by the switch to browser based applications. And while Valve’s SteamOS effort didn’t taken off, it has provided Linux users with access to a huge catalog of games, removing a reason that I know caused a few of my friends to mostly abandon using Linux on their computers. And you can actually as a consumer buy linux from a range of vendors now, who try to properly support Linux on their hardware. And this includes a major player like Dell and smaller outfits like System76 and Purism.

And since I do work for Red Hat managing our Desktop Engineering team I should address the question of if Red Hat will be a major driver in taking Desktop linux to that 10%? Well Red Hat will continue to support end evolve our current RHEL Workstation product, and we are seeing a steady growth of new customers for it. So if you are looking for a solid developer workstation for your company you should absolutely talk to Red Hat sales about RHEL Workstation, but Red Hat is not looking at aggressively targeting general consumer computers anytime soon. Caveat here, I am not a C-level executive at Red Hat, so I guess there is always a chance Jim Whitehurst or someone else in the top brass is mulling over a gigantic new desktop effort and I simply don’t know about it, but I don’t think it is likely and thus would not advice anyone to hold their breath waiting for such a thing to be announced :). That said Red Hat like any company out there do react to market opportunities as they arise, so who knows what will happen down the road. And we will definitely keep pushing Fedora Workstation forward as the place to experience the leading edge of the Desktop Linux experience and a great portal into the world of Linux on servers and in the cloud.

So to summarize; there are a lot of things happening in the market that could provide the right set of people the opportunity they need to finally take Linux to critical mass. Whether there is anyone who has the timing and skills to pull it off is of course always an open question and it is a question which will only be answered the day someone does it. The only thing I am sure of is that Linux community are providing a stronger technical foundation for someone to succeed with than ever before, so the question is just if someone can come up with the business model and the market skills to take it to the next level. There is also the chance that it will come in a shape we don’t appreciate today, for instance maybe ChromeOS evolves into a more full fledged operating system as it grows in popularity and thus ends up being the Linux on the Desktop end game? Or maybe Valve decides to relaunch their SteamOS effort and it provides the foundation for a major general desktop growth? Or maybe market opportunities arise that will cause us at Red Hat to decide to go after the desktop market in a wider sense than we do today? Or maybe Endless succeeds with their vision for a Linux desktop operating system? Or maybe the idea of a desktop operating system gets supplanted to the degree that we in the end just sit there saying ‘Alexa, please open the IDE and take dictation of this new graphics driver I am writing’ (ok, probably not that last one ;)

And to be fair there are a lot of people saying that Linux already made it on the desktop in the form of things like Android tablets. Which is technically correct as Android does run on the Linux kernel, but I think for many of us it feels a bit more like a distant cousin as opposed to a close family member both in terms of use cases it targets and in terms of technological pedigree.

As a sidenote, I am heading of on Yuletide vacation tomorrow evening, taking my wife and kids to Norway to spend time with our family there. So don’t expect a lot new blog posts from me until I am back from DevConf in early February. I hope to see many of you at DevConf though, it is a great conference and Brno is a great town even in freezing winter. As we say in Norway, there is no such thing as bad weather, it is only bad clothing.